Pry

LLM Interpretability Explorer

Language models are black boxes. You give them text, they predict the next token, and nothing tells you what happened in between. Pry runs a small transformer on your machine and gives you tools to actually look inside. Every visualization is computed live from the real model weights, not from a pre-baked dataset or a summary that somebody else decided was interesting.

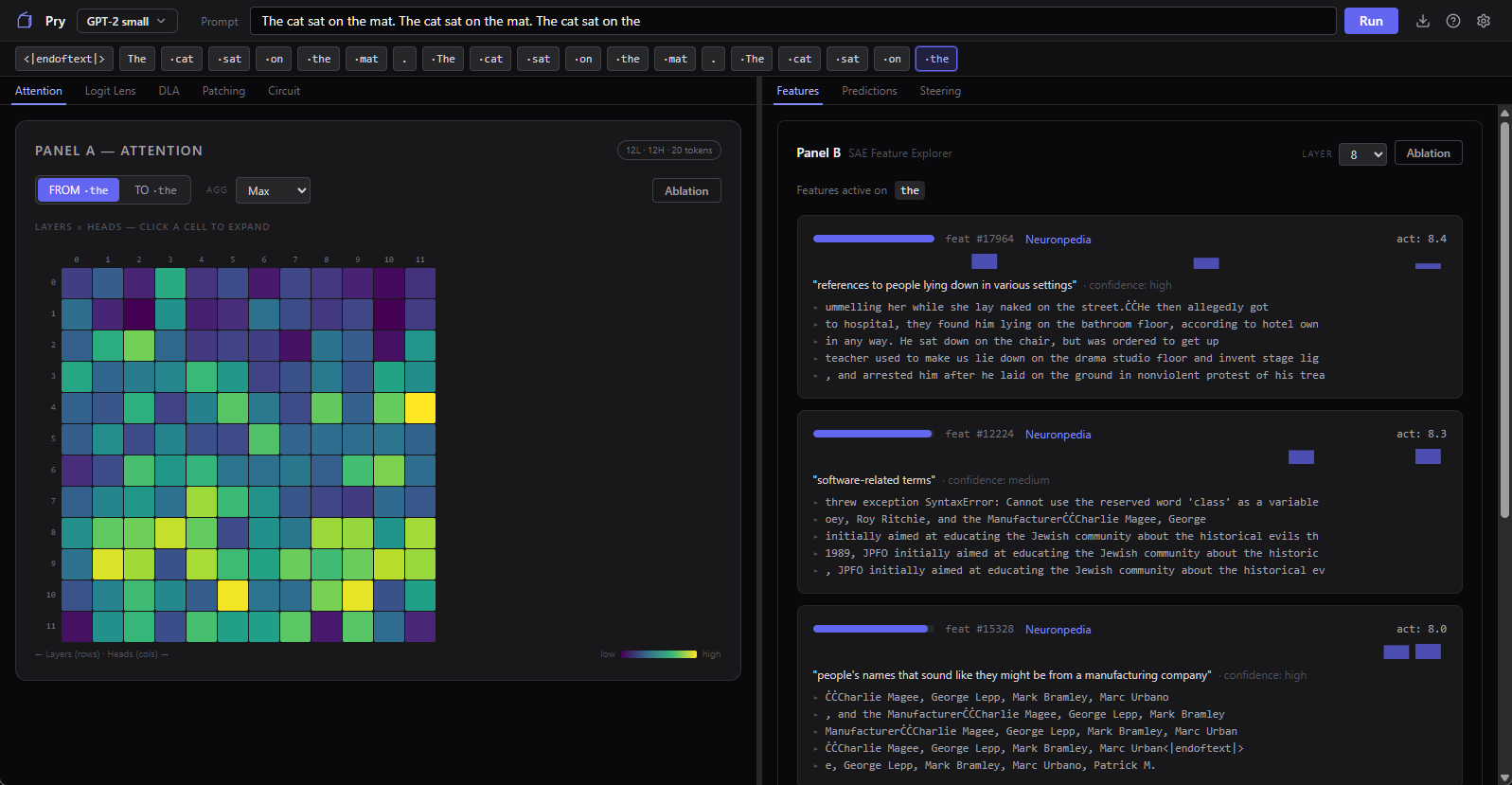

You type a prompt and Pry shows you what the model predicts, which tokens it was paying attention to, what internal concepts fired, and how its answer changed layer by layer. Then you can mess with it. Steer a feature, knock out an attention head, swap activations between two prompts, and see what breaks.

What You Can See

- Next-Token Predictions — ranked list of the model's top guesses for the next word, with confidence scores

- Attention Heatmaps — which earlier tokens the model was looking at when it made each prediction

- SAE Features — the model has thousands of internal "concepts" (things like "past-tense verb" or "professional role") that sparse autoencoders can surface. Pry shows them with activation strengths and Neuronpedia links.

- Logit Lens — what the model would've predicted at each intermediate layer, so you can watch it go from noise to a coherent answer one layer at a time

- Direct Logit Attribution — which specific attention heads and MLP layers pushed for or against the predicted token. Basically the "why did it say that" panel.
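
The last two panels are easier to picture with numbers. Below is a minimal toy sketch in plain Python, not Pry's actual code: the three-token vocabulary, the unembedding matrix `W_U`, and the per-component vectors are all made up for illustration, and the final LayerNorm that a real model applies before unembedding is left out. The logit lens decodes the running residual stream after each component, and direct logit attribution projects each component's output onto a single token's logit.

```python
import math

# Hypothetical toy setup: 3-token vocabulary, residual stream of width 2.
VOCAB = ["cat", "dog", "fish"]

# Unembedding matrix W_U: one row per residual dimension, one column per token.
W_U = [
    [1.0, -1.0, 0.0],
    [0.0, 1.0, -1.0],
]

# What each component adds to the residual stream at the final position.
# Made-up values; in a real model they come from cached activations.
COMPONENTS = [
    ("embed",   [0.1, 0.0]),
    ("L0.attn", [0.0, 0.5]),
    ("L0.mlp",  [-0.3, 0.2]),
    ("L1.attn", [-0.8, 1.0]),
    ("L1.mlp",  [-0.5, 0.8]),
]


def unembed(resid):
    """Project a residual vector onto the vocabulary to get logits."""
    return [sum(resid[d] * W_U[d][t] for d in range(len(resid)))
            for t in range(len(VOCAB))]


def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def logit_lens(components):
    """Decode the running residual stream after each component is added."""
    resid = [0.0] * len(W_U)
    rows = []
    for name, out in components:
        resid = [r + o for r, o in zip(resid, out)]
        probs = softmax(unembed(resid))
        best = max(range(len(VOCAB)), key=lambda t: probs[t])
        rows.append((name, VOCAB[best], probs[best]))
    return rows


def direct_logit_attribution(components, token):
    """Each component's direct contribution to one token's logit."""
    t = VOCAB.index(token)
    return {name: unembed(out)[t] for name, out in components}


for name, top_token, prob in logit_lens(COMPONENTS):
    print(f"after {name:8s} top={top_token:5s} p={prob:.3f}")

for name, contrib in direct_logit_attribution(COMPONENTS, "dog").items():
    print(f"{name:8s} contributes {contrib:+.2f} to the 'dog' logit")
```

Because unembedding is linear in this sketch, the per-component attributions add up to the final logit for the chosen token, which is why the attribution view can be read as a breakdown of the prediction.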

What You Can Do

- Feature Steering — dial a SAE feature up or down and watch the output change in real time

- Ablation — zero out an attention head and see if the model still gets the answer right

- Activation Patching — swap pieces of one prompt's internals into another to find which components are actually doing the work

- Circuit View — trace the path from input through attention heads and MLP layers to the final prediction
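
Ablation and activation patching can be sketched on a toy model as well. The example below is an illustration under strong assumptions, not how Pry implements them: the component names (`L0.H1`, `L1.H3`, `L1.mlp`) and all numbers are hypothetical, and each component's contribution to the logits is treated as independent, whereas in a real transformer changing an early component also changes what later components compute.

```python
# Hypothetical toy model: logits are the sum of per-component contributions.
VOCAB = ["Paris", "Rome"]


def run(cache, overrides=None):
    """Sum component contributions into logits, optionally replacing some."""
    overrides = overrides or {}
    logits = [0.0] * len(VOCAB)
    for name, out in cache.items():
        out = overrides.get(name, out)
        logits = [l + o for l, o in zip(logits, out)]
    return logits


def predict(logits):
    """Return the token with the highest logit."""
    return VOCAB[max(range(len(VOCAB)), key=lambda t: logits[t])]


def ablate(cache, name):
    """Zero ablation: remove one component's contribution entirely."""
    return run(cache, {name: [0.0] * len(VOCAB)})


def patch(target, source, name):
    """Activation patching: run `target` with one component taken from `source`."""
    return run(target, {name: source[name]})


# Made-up cached contributions for a "clean" and a "corrupted" prompt.
clean = {"L0.H1": [0.2, 0.1], "L1.H3": [2.0, -0.5], "L1.mlp": [0.3, 0.6]}
corrupt = {"L0.H1": [0.1, 0.2], "L1.H3": [-0.5, 2.0], "L1.mlp": [0.3, 0.6]}

print("clean prediction:  ", predict(run(clean)))
print("corrupt prediction:", predict(run(corrupt)))
for name in clean:
    print(f"ablate {name} on clean -> {predict(ablate(clean, name))}")
    print(f"patch clean {name} into corrupt -> {predict(patch(corrupt, clean, name))}")
```

In this toy setup only one component changes the prediction when it is removed or swapped in, which is the kind of signal these tools are meant to surface: a component whose removal breaks the answer, or whose clean activation restores it, is a candidate for doing the actual work.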

Runs on Your Machine

Model weights download automatically on first launch (about 2 GB), the Python sidecar runs all inference and interpretability analysis locally, and nothing phones home. You don't need a terminal, a Python environment, or any idea what a Jupyter notebook is. Install it and start poking around.

Supported Models

Pry supports GPT-2 Small and Pythia-70M right now. Both are small enough to run interactively on a consumer GPU and complex enough to show real transformer behavior. Gemma-2B and Gemma-9B are on the roadmap.

Learn As You Go

There's a built-in tutorial that walks you through two demo prompts, explaining every panel as you go. Tooltips throughout the app cover what each visualization means and why you'd care. You don't need a background in mechanistic interpretability to start using this. The app teaches you the concepts as you poke around.

- Stack: Tauri 2 (Rust), SvelteKit, Python (TransformerLens, SAELens, PyTorch), Tailwind CSS

- Models: GPT-2 Small, Pythia-70M (Gemma-2B/9B planned)

- Platform: Windows 10+ (NVIDIA GPU with 4+ GB VRAM recommended)

- Runtime: Local-only (Python sidecar, no server dependency, auto-downloads model weights)

- Status: v0.1.0-alpha (open source)

Pry is part of the broader AI Content Handling Research effort — understanding how models behave requires understanding what they're doing internally.
