Projects

Live

Ongoing Research

In Development

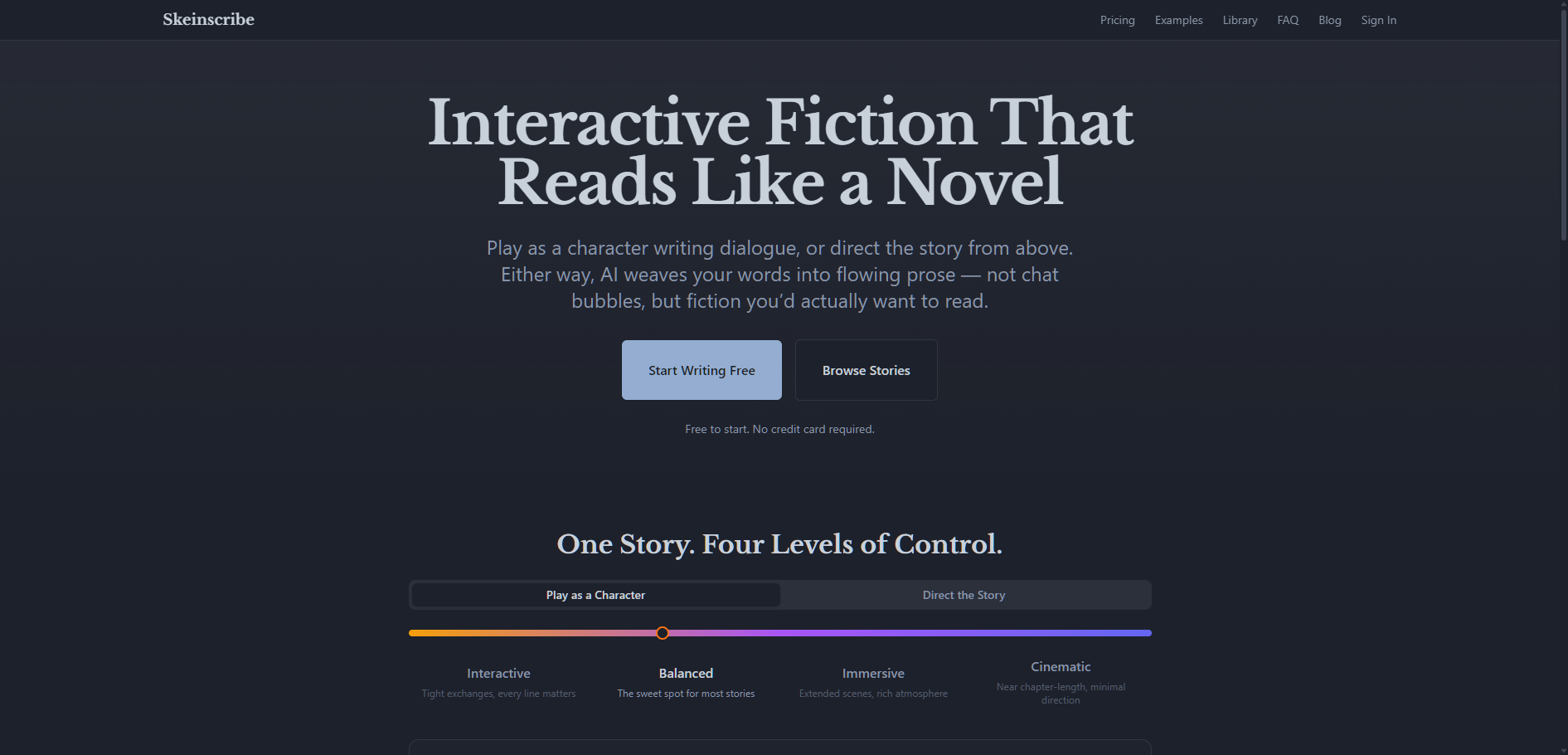

AI Solo TTRPG Game Master

Quest Engine

Solo TTRPG with an AI game master that actually runs the game — dice, NPCs, mysteries. Starting with Kids on Bikes.

AI Whiskey Sommelier

SommBot

Describe what you enjoy and get whiskey recommendations that actually make sense — from an AI with opinions about barrel aging.

AI Kink Education Platform

Parlé

Kink education in private. Figure out what you're into, practice negotiation, learn the vocabulary — before the stakes are real.

Open Source

AI Safety Research Tool

flinch

Research tool for AI content restrictions. Test how models from different providers respond to sensitive-content probes, with pushback coaching and structured data export.

LLM Interpretability Explorer

pry

Local-first desktop app for exploring what small transformer LLMs are doing inside. Attention visualization, logit lens, SAE features, and more.

Local AI Image Generation

blink

Dead-simple local AI image generation. Type a prompt, get an image. No Python, no node graphs. Supports SD 1.5, SDXL, Flux, and more.

Claude Code Plugins

beargle-plugins

A curated collection of Claude Code plugins. Install individually or browse the repo.
